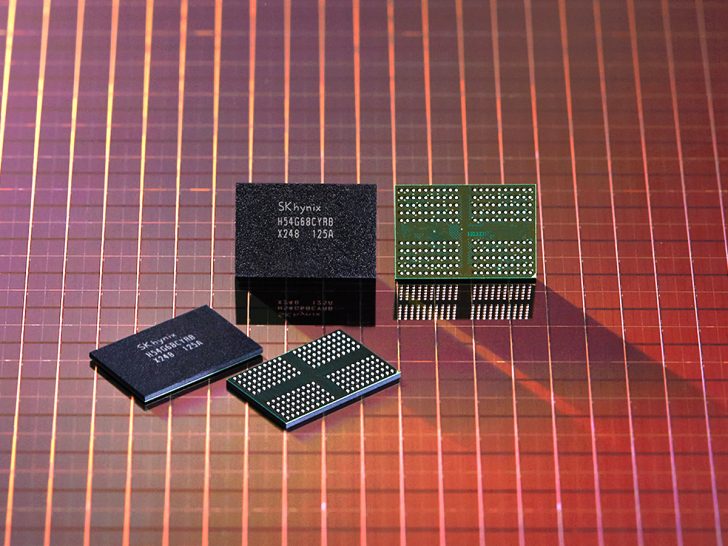

Rising memory costs are weighing on cloud providers' CapEx, but NVIDIA benefits from a privileged position in the DRAM supply chain. The hyperscalers continue to buy at a premium to support AI at scale, without slowing their deployments.

30% of CapEx in 2026

According to SemiAnalysis, memory's share of the budget rose from around 8% in CY23 and CY24 to almost 30% expected in CY26, with a further increase likely in CY27. Shortages persist, but spending is not slowing. Cloud providers buy on the spot market or under contract, for lack of alternatives, to support training, inference and generative AI.

Memory is taking over Hyperscaler CapEx.

In CY23 and CY24, memory was ~8% of total Hyperscaler spend. We estimate it hits 30% in CY26 and moves higher in CY27. That’s a near-4x shift in just four years. (1/4) 🧵 pic.twitter.com/fUxpwUYfcO

— SemiAnalysis (@SemiAnalysis_) April 3, 2026

For operators, budget trade-offs are getting tougher. Memory eats into the server and network budget, yet it remains essential to sustaining the pace of GPU deployments and delivering the performance customers expect.

DDR5, LPDDR5 and CXL

Demand is surging with the adoption of DDR5 and LPDDR5 and the rollout of rack-scale memory pools connected over CXL. Shortages are hitting the "consumer" DRAM markets hardest, as supply is cannibalized by the needs of data centers and their proprietary silicon projects.

Likely consequences: firm prices, increased pressure on integrators, and an advantage for players able to optimize memory usage and interconnects. Smaller clouds risk slower deployments, especially for inference and image generation.


VVP status at NVIDIA

According to SemiAnalysis, NVIDIA enjoys a highly privileged "VVP" DRAM customer status, with superior leverage on capacity and pricing. The group has locked in long-term contracts in anticipation of demand, as Jensen Huang has already pointed out. The result: shortages affect it less directly.

The advantage is not limited to memory: semiconductors, advanced packaging and logistics together form a barrier to entry. To compete, a vendor must deliver high-performance GPUs, a solid software ecosystem and a secured upstream supply chain; otherwise it faces delays or prohibitive costs.

If DRAM prices climb further, the gap between integrated leaders and their challengers could widen, forcing painful trade-offs for some AI deployments.